At Consensus 2026, Cardano’s Charles Hoskinson said that “users should probably never have their private keys,” adding that “something should have the private keys for the users.”

He argued that the secure chips embedded in iPhones, Android phones, and Samsung devices outperform those in Ledger and Trezor devices, and that most crypto users already carry better signing hardware in their pockets without realizing it.

Private key management has been a bottleneck to retail adoption since Bitcoin’s earliest days. Users struggle with their 12- or 24-word seed phrases: they forget them, photograph them, store them in cloud notes, or lose them entirely.

Hardware wallets solved the extraction problem, since a Ledger or Trezor generates and stores keys that never leave the device in plaintext. In doing so, they introduced a friction that mainstream users have consistently rejected.

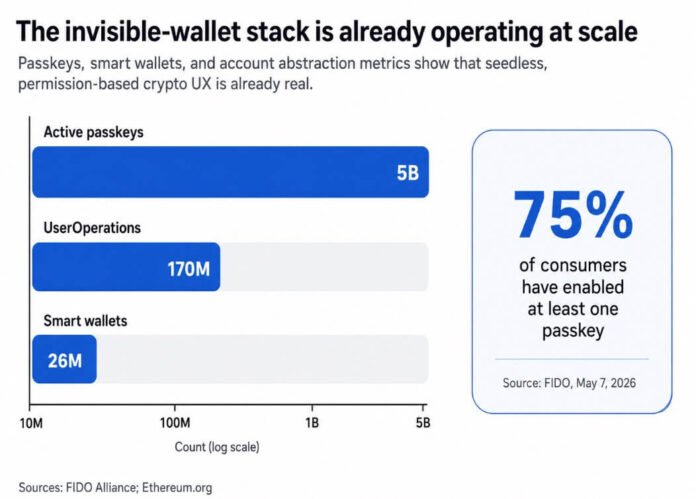

The FIDO Alliance reported on May 7 that there are now 5 billion active passkeys globally and that 75% of consumers have enabled at least one. Users already accept device-bound, biometric-unlocked credentials as a normal part of authentication.

Coinbase’s smart wallet puts this into practice: users onboard with an Apple or Google passkey instead of a recovery phrase, and the wallet creates a non-exportable credential bound to secure hardware. Face ID or a PIN becomes the only interface the user needs.

Hoskinson is correct that mainstream phones contain serious security hardware. Apple’s Secure Enclave is a dedicated subsystem isolated from the main processor, and Apple says it keeps sensitive data protected even if an attacker compromises the application-processor kernel.

Android’s Keystore system supports hardware-backed keys that can stay non-exportable and bind to a Trusted Execution Environment or secure element, with StrongBox implementations adding a dedicated CPU and further isolation requirements.

Samsung’s Knox system provides hardware-backed key protection through TrustZone, with DualDAR adding another encryption layer for data in managed work profiles.

Hoskinson described the Knox work profile as “a separate operating system, separate circuits in the hardware.”

| Model | Where the key lives | Can the key be extracted? | Can malware still trick signing? | How transaction details are verified | Best use case |
|---|---|---|---|---|---|
| Seed phrase wallet | Derived from a 12- or 24-word recovery phrase, often stored in software or written down by the user | Yes, potentially: the secret can be exposed through bad storage, screenshots, cloud backups, phishing, or device compromise | Yes: if the wallet app or device is compromised, the attacker may trick the user or steal the secret outright | Usually through the wallet app interface on the same device | Low-friction onboarding, small balances, users comfortable with manual backup |
| Phone-based hardware-backed wallet | Inside a phone’s secure hardware, such as Apple Secure Enclave, Android Keystore/TEE/StrongBox, or Samsung Knox-backed protections | Generally no: the key can remain non-exportable and bound to device hardware | Yes: the key may stay protected, but a compromised app or OS could still try to get the device to sign something malicious | Through the phone UI, biometrics, PIN, and wallet prompts; security depends heavily on approval UX and intent verification | Everyday payments, routine self-custody, mainstream users, seedless/passkey-style onboarding |
| Dedicated hardware wallet | Inside a separate signing device such as Ledger or Trezor | Generally no: keys are designed to stay on the device and not leave in plaintext | Much harder, but not impossible: the key is better isolated, though attackers may still try to deceive the user into approving a bad transaction | On the wallet’s own trusted display or secure screen, physically separate from the phone or computer | Larger balances, long-term storage, users who want stronger isolation and a cleaner threat model |
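The first table row treats the recovery phrase itself as the secret. That follows from how BIP-39 turns words into a wallet seed: PBKDF2 with HMAC-SHA512 over the NFKD-normalized phrase, salted with the string "mnemonic" plus an optional passphrase, for 2048 iterations, producing 64 bytes. The sketch below uses only the Python standard library; the function name `bip39_seed` is our own, and it skips wordlist and checksum validation, so treat it as an illustration rather than wallet code.

```python
import hashlib
import unicodedata


def bip39_seed(mnemonic: str, passphrase: str = "") -> bytes:
    """Derive the 64-byte BIP-39 seed from a mnemonic (illustrative sketch).

    Skips wordlist and checksum validation; it only shows that the seed is a
    deterministic function of the words plus an optional passphrase.
    """
    # BIP-39 applies Unicode NFKD normalization to both inputs.
    password = unicodedata.normalize("NFKD", mnemonic).encode("utf-8")
    salt = unicodedata.normalize("NFKD", "mnemonic" + passphrase).encode("utf-8")
    # PBKDF2 with HMAC-SHA512, 2048 iterations, 64-byte output.
    return hashlib.pbkdf2_hmac("sha512", password, salt, 2048, dklen=64)


# A well-known public test phrase; never use it for real funds.
words = " ".join(["abandon"] * 11 + ["about"])
seed = bip39_seed(words)

# Anyone holding a photo or cloud note of the words recomputes the same seed.
assert bip39_seed(words) == seed
# An optional passphrase yields an entirely different seed (and wallet).
assert bip39_seed(words, passphrase="extra") != seed
```

Nothing in the derivation depends on the device, which is why a screenshot or cloud backup of the phrase is as good as the wallet itself.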

Dedicated wallets hold an advantage

Phone-based secure hardware and dedicated signing devices operate on different threat models.

Ledger’s secure element drives a secure screen on the device itself, so users can verify transaction details even when the connected phone or laptop is under attack.

Trezor’s trusted display shows the transaction being signed, regardless of what the host machine displays. Trezor’s newer Safe 3, Safe 5, and Safe 7 models also include secure elements, so the critique that hardware wallets lack secure silicon is now outdated.
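The trusted-display argument can be sketched as a toy model: the host computer supplies both the raw transaction bytes and its own description of them, but the device renders its summary from the bytes it will actually sign and ignores the host's text. Everything here is hypothetical: the `SigningDevice` class, the JSON transaction format, and the use of HMAC-SHA256 as a stand-in for a real ECDSA or EdDSA signature.

```python
import hashlib
import hmac
import json
from typing import Callable, Optional


class SigningDevice:
    """Toy hardware wallet with its own trusted display (hypothetical model).

    HMAC-SHA256 stands in for a real signature algorithm, and transactions
    are assumed to be JSON-encoded bytes for simplicity.
    """

    def __init__(self, secret: bytes, user_approves: Callable[[str], bool]):
        self._secret = secret                # never sent back to the host
        self._user_approves = user_approves  # models buttons on the device

    def sign(self, tx_bytes: bytes, host_description: str) -> Optional[bytes]:
        # host_description comes from a possibly compromised host; the device
        # never shows or trusts it.
        tx = json.loads(tx_bytes)
        # The screen text is derived from the exact bytes being signed.
        screen = f"Send {tx['amount']} to {tx['to']}"
        if not self._user_approves(screen):
            return None
        return hmac.new(self._secret, tx_bytes, hashlib.sha256).digest()


shown: list[str] = []


def user(screen: str) -> bool:
    # The user intends to pay alice 5 and approves only that.
    shown.append(screen)
    return screen == "Send 5 to alice"


device = SigningDevice(b"device-secret", user)

# Host malware swaps the recipient but keeps the honest-looking description.
tampered = json.dumps({"to": "mallory", "amount": 5}).encode()
result = device.sign(tampered, host_description="Send 5 to alice")
print(shown[-1], result)  # Send 5 to mallory None
```

The user rejects the payment because the device screen names mallory, whatever the host claimed. As the table notes, this still does not help a user who approves without reading the screen.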

The shortcoming Hoskinson identified is accessibility: Ledger and Trezor require a separate device, a companion app, and a signing flow that interrupts the transaction.

For everyday transaction volumes and routine self-custody, phones are plausible primary signers. For larger balances, or for users who want the strongest available threat model, dedicated devices keep the signing screen physically separate from a potentially compromised host, so malware on that host cannot change what the device displays.

The integration of AI into payments adds another layer to the stack. AI agents need payment authority to be useful, but most users would not knowingly give an agent access to a master private key.

The viable architecture is bounded delegation: an agent is authorized to spend within preset limits over a set period, without access to the credential that controls the broader wallet.

Base’s Spend Permissions documentation already frames AI-agent purchases as a core use case for recurring, limited-scope authorizations. Coinbase’s AgentCore Payments integration and AWS’s stablecoin agent payment tooling implement the same model of agents transacting under budget controls with full audit logs, without direct private-key access.
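Bounded delegation reduces to a small amount of state checked on every spend. The sketch below models a recurring allowance: a named spender may move up to `allowance` per `period` seconds between `start` and `end`, and the owner can revoke it. The `SpendPermission` class and its fields are hypothetical and only loosely echo the recurring-allowance idea; they are not Base's contract interface, and a real deployment would enforce these checks onchain rather than in Python.

```python
from dataclasses import dataclass, field


@dataclass
class SpendPermission:
    """Hypothetical recurring allowance for an agent (not any real contract API)."""

    spender: str     # the agent's address or identifier
    allowance: int   # max spend per period, in the token's smallest unit
    period: int      # period length in seconds
    start: int       # unix time when the permission becomes valid
    end: int         # unix time when it expires (exclusive)
    revoked: bool = False
    spent: dict[int, int] = field(default_factory=dict)  # period index -> amount used

    def revoke(self) -> None:
        self.revoked = True

    def spend(self, caller: str, amount: int, now: int) -> bool:
        """Record and allow the spend only if every bound holds."""
        if self.revoked or caller != self.spender or amount <= 0:
            return False
        if not self.start <= now < self.end:
            return False
        # Which period `now` falls in; the allowance resets when this changes.
        index = (now - self.start) // self.period
        used = self.spent.get(index, 0)
        if used + amount > self.allowance:
            return False
        self.spent[index] = used + amount
        return True


DAY = 86_400
perm = SpendPermission(spender="agent", allowance=100, period=DAY, start=0, end=30 * DAY)
print(perm.spend("agent", 60, now=10))        # True
print(perm.spend("agent", 50, now=20))        # False: exceeds this period's 100
print(perm.spend("agent", 50, now=DAY + 1))   # True: a new period began
print(perm.spend("mallory", 1, now=DAY + 2))  # False: not the delegated spender
perm.revoke()
print(perm.spend("agent", 1, now=DAY + 3))    # False: revoked
```

The agent never touches the wallet's master credential. It holds only the authority this object grants, so the worst case is bounded by `allowance` per period until `end` or revocation.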

Ethereum’s ERC-4337 has enabled over 26 million smart wallets and 170 million UserOperations, and EIP-7702, shipped in the Pectra upgrade, extends programmable wallet behavior to externally owned accounts, enabling batching, gas sponsorship, recovery logic, and custom controls.

The infrastructure for permission-based, agent-compatible wallets already exists at a meaningful scale.

Your keys, but you never see them

“Not your keys, not your coins” was always as much a philosophical position as a technical one, and it assumes that users should handle cryptographic secrets directly.

Yet this position may not survive contact with mass-market distribution. The more durable version of self-custody generates a non-exportable key in secure hardware and unlocks it with biometrics, so the user never sees the raw key material.

What the user controls instead are spending caps, session keys, delegated allowances, recovery logic, and human-readable approval flows.

Apple’s secure intent mechanism links a physical button press directly to the Secure Enclave, confirming user intent in a way that even software with root or kernel privileges cannot spoof. Android Keystore can require user authentication for every individual use of a key.
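The difference between a time-window unlock and per-operation authentication can be shown with a toy key object. In the Android API, passing a timeout of 0 to `KeyGenParameterSpec.Builder.setUserAuthenticationParameters` requires authentication for every use of the key; the Python class below only mimics that policy, and its names, the `timeout_s` parameter, and the HMAC stand-in for a real signature are assumptions for illustration.

```python
import hashlib
import hmac


class UserAuthBoundKey:
    """Toy key gated by user authentication (hypothetical model).

    timeout_s == 0: each signature consumes one fresh confirmation
    (per-operation auth). timeout_s > 0: one unlock authorizes every
    signature made within that many seconds.
    """

    def __init__(self, secret: bytes, timeout_s: int):
        self._secret = secret
        self._timeout_s = timeout_s
        self._fresh_auth = False
        self._unlocked_until = float("-inf")

    def authenticate(self, now: float) -> None:
        # Models a successful Face ID, fingerprint, or PIN check at time `now`.
        self._fresh_auth = True
        self._unlocked_until = now + self._timeout_s

    def sign(self, message: bytes, now: float) -> bytes:
        if self._timeout_s == 0:
            if not self._fresh_auth:
                raise PermissionError("fresh authentication required")
            self._fresh_auth = False  # one confirmation buys one signature
        elif now > self._unlocked_until:
            raise PermissionError("authentication window expired")
        # HMAC-SHA256 stands in for the real signature algorithm.
        return hmac.new(self._secret, message, hashlib.sha256).digest()


def silent_signatures(key: UserAuthBoundKey, attempts: int, now: float) -> int:
    """After one legitimate unlock-and-sign, count extra signatures malware obtains."""
    key.authenticate(now)
    key.sign(b"the payment the user approved", now)
    extra = 0
    for i in range(attempts):
        try:
            key.sign(f"malware payload {i}".encode(), now + 1)
            extra += 1
        except PermissionError:
            pass
    return extra


print(silent_signatures(UserAuthBoundKey(b"k", timeout_s=30), 3, now=0.0))  # 3
print(silent_signatures(UserAuthBoundKey(b"k", timeout_s=0), 3, now=0.0))   # 0
```

Per-operation authentication removes silent follow-on signatures, but the one approved signature is still only as safe as the prompt the user read before authenticating.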

Those capabilities relocate custody from “can you keep a secret” to “can you verify what you meant to authorize.”

The sharpest limitation in Hoskinson’s framing is that a compromised application or operating system may be unable to extract a hardware-backed key while still being able to use it on the device.

Key non-extractability and transaction security are separate guarantees, and recent history shows how catastrophically that difference can play out.
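A few lines make the gap concrete. The toy below returns only `sign` and `verify` handles, so no function ever hands back the secret, which mirrors a non-exportable key (Python itself offers no real isolation); a compromised app still obtains a valid signature over a transaction of its choosing. The `provision_key` helper is hypothetical, and HMAC-SHA256 stands in for the asymmetric signature a real enclave would produce, which is why verification also goes through a handle here.

```python
import hashlib
import hmac
import secrets
from typing import Callable


def provision_key() -> tuple[Callable[[bytes], bytes], Callable[[bytes, bytes], bool]]:
    """Hypothetical stand-in for a hardware-backed, non-exportable key."""
    secret = secrets.token_bytes(32)  # generated "inside" and never returned

    def sign(message: bytes) -> bytes:
        # The hardware signs whatever bytes the calling software hands it.
        return hmac.new(secret, message, hashlib.sha256).digest()

    def verify(message: bytes, tag: bytes) -> bool:
        return hmac.compare_digest(sign(message), tag)

    return sign, verify


sign, verify = provision_key()

# A compromised wallet app never reads the secret, but it can still call sign().
attacker_tx = b'{"to": "attacker", "amount": 1000}'
attacker_sig = sign(attacker_tx)
print(verify(attacker_tx, attacker_sig))  # True
```

Non-extractability held throughout, yet the attacker got exactly what it needed: a valid signature on its own transaction.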

CertiK’s analysis of the Bybit incident found that attackers deceived signers into authorizing a malicious transaction. The attack succeeded even though the private keys never left the hardware.

Chainalysis reported that impersonation scams grew over 1,400% in 2025, and AI-enabled scams produced 4.5 times the returns of traditional ones.

A phone-native self-custody model would hide private keys from users while making transaction intent, approval UX, and spending limits the primary security surface.

Two trajectories

If wallets solve intent UX well enough to earn consumer trust via standardized spend caps, revocable delegation, and clear approval prompts, phone-primary self-custody could account for 70% to 85% of new retail users by 2028.

Seedless onboarding becomes the default, account abstraction moves from advanced feature to baseline expectation, and the seed phrase becomes a configuration option for users who want it.

If mobile signing incidents, phishing, compromised approval flows, or confusing recovery mechanics continue to produce high-profile losses, phone-based self-custody stalls at 20% to 35% of the retail market.

Users who lose funds to a phone-wallet manipulation attack would call it a hack and return to exchanges.

The uncomfortable subtext in either trajectory is platform dependence. If self-custody moves into hardware embedded in phones, then Apple, Google, Samsung, and major wallet SDK providers become central points of control in crypto’s security architecture.

The model stays non-custodial in a technical sense, but wallet security comes to depend more heavily on OS APIs, enclave access policies, and app distribution rules.